Mastering the Art of Data Engineering: Essential Skills for Success

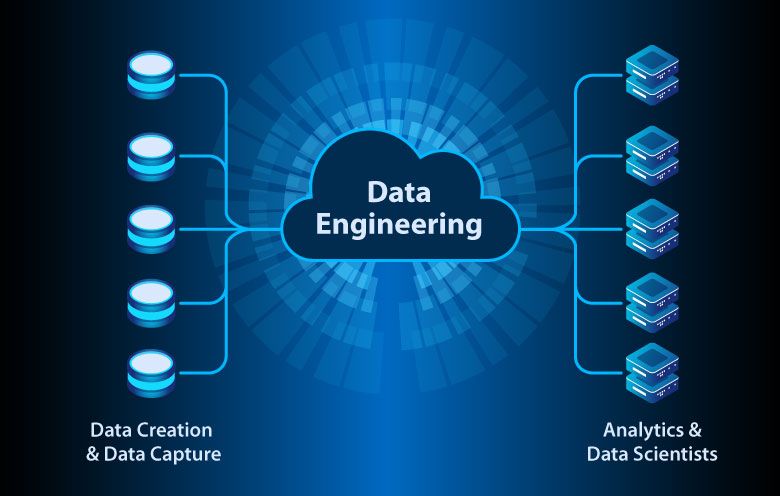

Data engineering is the practice of designing, building, and managing the infrastructure and systems that enable organizations to collect, store, process, and analyze large volumes of data. It combines technical skills, such as programming and database management, with an understanding of business needs and objectives. Data engineering is crucial today because organizations rely on data to make informed decisions, gain insights, and drive innovation.

In today’s digital age, data is being generated at an unprecedented rate. From social media posts and online transactions to sensor data from IoT devices, a vast amount of information can be harnessed for various purposes. However, raw data is often messy, unstructured, and scattered across different sources. This is where data engineering comes in. Data engineers are responsible for transforming raw data into a usable format by cleaning, organizing, and integrating it into a central repository. This enables organizations to extract valuable insights and make data-driven decisions.

Real-life examples of data engineering can be seen in various industries. In the healthcare sector, data engineers play a crucial role in managing electronic health records (EHRs) and ensuring the security and privacy of patient data. In the retail industry, data engineers help analyze customer behavior and preferences to personalize marketing campaigns and improve customer experience. In the financial sector, data engineers build robust systems for fraud detection and risk management. These examples highlight the importance of data engineering in enabling organizations to leverage their data assets effectively.

Essential Skills for Data Engineers: Technical and Soft Skills

Data engineering requires a combination of technical skills and soft skills. On the technical side, data engineers need a strong foundation in programming languages such as Python or Java, fluency in SQL, and experience with both relational and NoSQL database systems. They should also be familiar with data integration tools, ETL (Extract, Transform, Load) processes, and big data technologies like Hadoop and Spark.

In addition to technical skills, data engineers also need to possess certain soft skills that are essential for success in their role. One of the most important soft skills for data engineers is problem-solving. They need to be able to analyze complex data-related challenges and come up with innovative solutions. Attention to detail is also crucial, as data engineers often work with large datasets and must ensure accuracy and consistency.

Communication skills are another important aspect of being a successful data engineer. Data engineers often work as part of a team, collaborating with data scientists, analysts, and other stakeholders. They need to effectively communicate their ideas, explain technical concepts to non-technical colleagues, and understand the requirements and objectives of the business.

Data Modeling and Database Design: Key Concepts and Best Practices

Data modeling is the process of creating a conceptual representation of the data stored in a database. It involves identifying the entities, their attributes, and the relationships between them. Database design, in turn, focuses on translating the conceptual model into a physical database structure.

Key concepts in data modeling include entities, which are the objects or concepts represented in the database; attributes, which describe the characteristics of the entities; and relationships, which define how entities are related to each other. Data engineers must have a solid understanding of these concepts to design efficient and effective databases.
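To make these three concepts concrete, here is a minimal sketch in Python. The `Customer` and `Order` entities, their fields, and the `orders_for` helper are hypothetical names chosen for illustration; they are not drawn from any particular system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Customer:
    """Entity: a customer. Each field is an attribute of the entity."""
    customer_id: int
    name: str
    email: str


@dataclass
class Order:
    """Entity: an order placed by exactly one customer."""
    order_id: int
    customer_id: int  # relationship: points at the owning Customer
    total_cents: int


def orders_for(customer: Customer, orders: List[Order]) -> List[Order]:
    """Follow the one-to-many relationship from a customer to its orders."""
    return [o for o in orders if o.customer_id == customer.customer_id]


alice = Customer(1, "Alice", "alice@example.com")
orders = [Order(100, 1, 2500), Order(101, 2, 900), Order(102, 1, 1200)]
alice_orders = orders_for(alice, orders)
```

In a physical design, each entity would typically become a table, each attribute a column, and the `customer_id` reference a foreign key.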

When it comes to best practices for data modeling and database design, there are several guidelines that data engineers should follow. First and foremost, it is important to clearly understand the business requirements and objectives before starting the design process. This will help ensure the database structure aligns with the organization’s needs.

Normalization is another important concept in database design. It involves organizing data into tables so that redundancy is minimized and data integrity is maintained. By eliminating duplicate data and ensuring that each piece of information is stored in only one place, data engineers can keep the database consistent and make updates simpler and less error-prone.
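The "stored in only one place" idea can be sketched with Python's built-in `sqlite3` module. The table and column names below are assumptions made for the example. Because the customer's email lives only in the `customers` table, a single `UPDATE` is reflected in every order that refers to that customer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # ask SQLite to enforce the reference
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    email       TEXT NOT NULL UNIQUE
);
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
    total_cents INTEGER NOT NULL
);
""")

conn.execute("INSERT INTO customers VALUES (1, 'Alice', 'alice@example.com')")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(100, 1, 2500), (102, 1, 1200)],
)

# The email is stored once, so one UPDATE fixes it for all of Alice's orders.
conn.execute(
    "UPDATE customers SET email = 'alice@new.example.com' WHERE customer_id = 1"
)

rows = conn.execute("""
    SELECT o.order_id, c.email
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
""").fetchall()
```

Had the email been copied into every order row instead, the same change would need to touch each copy, and any missed row would leave the data inconsistent.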

Extract, Transform, Load (ETL) Processes: Strategies for Efficient Data Integration

ETL processes are a critical component of data engineering. ETL stands for extract, transform, and load and refers to extracting data from various sources, transforming it into a usable format, and loading it into a target database or data warehouse.
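The three stages can be sketched as three small functions. This is a minimal illustration using only the Python standard library, not a production pipeline. The inline CSV data, the `orders` table, and the function names are all hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical source data; in practice this might be a file, an API, or a database.
RAW_CSV = """order_id,customer,amount
100, Alice ,25.00
101,bob,9.00
102,ALICE,12.50
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim and lowercase names, convert amounts to integer cents."""
    return [
        (
            int(r["order_id"]),
            r["customer"].strip().lower(),
            round(float(r["amount"]) * 100),
        )
        for r in rows
    ]


def load(records, conn):
    """Load: write records to the target table; re-running replaces, not duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT, amount_cents INTEGER)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
totals = conn.execute(
    "SELECT customer, SUM(amount_cents) FROM orders "
    "GROUP BY customer ORDER BY customer"
).fetchall()
```

Using `INSERT OR REPLACE` keyed on `order_id` is one way to make the load step safe to re-run, which matters once the pipeline is scheduled rather than run by hand.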

One of the key strategies for efficient data integration is to automate the ETL process as much as possible. This can be done using tools and technologies that allow for the seamless extraction, transformation, and loading of data. Automation saves time and effort and reduces the risk of human error.

Another important aspect of efficient data integration is data quality. Data engineers must ensure that the data extracted from different sources is accurate, complete, and consistent. This involves performing data cleansing and validation to identify and correct any errors or inconsistencies in the data.
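A validation step might look something like the sketch below. The rules (an email must contain `@`, an age must fall between 0 and 130) and the field names are illustrative assumptions; real rules come from the business requirements.

```python
def validate(record):
    """Return a list of problems found in one record (empty list means valid)."""
    problems = []
    email = record.get("email")
    if not email or "@" not in email:
        problems.append("missing or malformed email")
    age = record.get("age")
    if age is None:
        problems.append("missing age")
    elif not 0 <= age <= 130:
        problems.append("age out of range")
    return problems


records = [
    {"id": 1, "email": "a@example.com", "age": 34},
    {"id": 2, "email": "not-an-email", "age": 29},
    {"id": 3, "email": "c@example.com", "age": -5},
    {"id": 4, "email": None, "age": None},
]

# Split the batch: valid records continue, rejected ones keep their reasons.
valid = [r for r in records if not validate(r)]
rejected = {r["id"]: validate(r) for r in records if validate(r)}
```

Keeping the reasons alongside rejected records, rather than silently dropping them, makes it possible to report problems back to the source system.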

Data profiling is another strategy that can be used to improve the efficiency of ETL processes. Data profiling involves analyzing the data’s structure, content, and quality to gain insights into its characteristics. This can help identify any issues or anomalies in the data that must be addressed before loading it into the target database.
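As a rough sketch of what profiling a single column can involve, the hypothetical `profile` function below counts missing values, distinct values, and the Python types present. The sample rows are invented for the example.

```python
from collections import Counter


def profile(rows, column):
    """Summarize one column: size, missing values, distinct values, and types."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v is not None and v != ""]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "types": dict(Counter(type(v).__name__ for v in present)),
        "most_common": Counter(present).most_common(1),
    }


rows = [
    {"country": "DE", "amount": 10.5},
    {"country": "DE", "amount": "12"},
    {"country": "", "amount": 7.0},
    {"country": "FR", "amount": None},
]

country_profile = profile(rows, "country")
amount_profile = profile(rows, "amount")
```

Here the `types` entry for `amount` would reveal a mix of floats and strings, which is exactly the kind of anomaly worth resolving before the load step.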

Big Data Technologies: Hadoop, Spark, and More

Big data technologies have revolutionized the field of data engineering by enabling organizations to process and analyze massive volumes of data quickly and efficiently. Two of the most popular big data technologies are Hadoop and Spark.

Hadoop is an open-source framework that allows for distributed processing of large datasets across clusters of computers. Its core components include the Hadoop Distributed File System (HDFS), which stores large files across multiple machines; MapReduce, which processes and analyzes the data in parallel; and YARN, which manages cluster resources and schedules jobs.
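The MapReduce programming model can be illustrated with the classic word count, simulated here in plain Python on a single machine. This sketch does not use Hadoop's API; the function names are hypothetical, and on a real cluster the map and reduce calls would run in parallel on different nodes.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


documents = ["big data big insights", "data pipelines move data"]
mapped = [pair for doc in documents for pair in map_phase(doc)]
counts = dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
```

Because each document is mapped independently and each key is reduced independently, both phases can be spread across many machines, which is what makes the model suitable for very large datasets.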

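Spark, by contrast, is a fast, general-purpose cluster computing system that provides in-memory processing capabilities. Because it can keep intermediate results in memory instead of writing them to disk between steps, it is often considerably faster than Hadoop MapReduce for iterative workloads, and it is more flexible, which makes it well suited to near-real-time data processing and machine learning applications.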

In addition to Hadoop and Spark, there are several other big data technologies that data engineers should be familiar with. These include Apache Kafka for real-time data streaming, Apache Cassandra for distributed NoSQL storage, and Apache Hive for data warehousing and SQL-like querying.

Data Warehousing: Designing and Implementing Effective Data Storage Solutions

Data warehousing involves designing and implementing a centralized repository for storing and managing large volumes of structured and semi-structured data. It provides a way to organize and consolidate data from different sources, making it easier to analyze and extract insights.

When designing a data warehouse, data engineers need to consider several factors. One of the key considerations is scalability. The data warehouse should be able to handle increasing volumes of data as the organization grows. This can be achieved by using distributed architectures and technologies that allow horizontal scaling, meaning that capacity grows by adding machines rather than by upgrading a single one.
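One common building block of horizontal scaling is partitioning rows across machines by a hash of their key. The sketch below is a simplified illustration of that idea, not how any specific warehouse product works. The `shard_for` function and the `customer-N` keys are hypothetical.

```python
import hashlib


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard number using a stable hash of the key."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % num_shards


keys = [f"customer-{i}" for i in range(1000)]

# Assign every key to one of four shards.
placement = {}
for k in keys:
    placement.setdefault(shard_for(k, 4), []).append(k)
```

A stable hash such as SHA-256 is used instead of Python's built-in `hash()` because the latter is randomized per process for strings. Note that with simple modulo placement, changing the number of shards moves most keys; techniques such as consistent hashing are often used to reduce that movement.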

Data security is another important aspect of data warehousing. Data engineers must ensure that the data stored in the warehouse is protected from unauthorized access or breaches. This involves implementing robust security measures such as encryption, access controls, and regular backups.

Data integration is also a critical component of effective data warehousing. Data engineers must ensure that the data from different sources is integrated seamlessly into the warehouse, allowing easy analysis and reporting. This can be achieved through ETL processes and data integration tools.

Data Quality and Governance: Ensuring Accuracy, Consistency, and Compliance

Data quality refers to the accuracy, completeness, consistency, and reliability of data. Organizations must have high-quality data to make informed decisions and gain meaningful insights. Data engineers ensure data quality by implementing data cleansing and validation processes.

Data governance, meanwhile, refers to the overall management and control of data within an organization. It involves defining policies, procedures, and standards for data management and ensuring compliance with regulatory requirements. Data engineers must be familiar with data governance principles and practices to ensure that data is managed effectively and in accordance with legal and ethical standards.

Data privacy is a major concern today, with increasing regulations such as the European Union’s General Data Protection Regulation (GDPR). Data engineers must be aware of these regulations and ensure that the data they handle is protected and used responsibly. This involves implementing security measures, obtaining consent from individuals where it is required for collecting their data, and providing transparency about how the data will be used.

Cloud Computing and Data Engineering: Leveraging AWS, Azure, and Google Cloud

Cloud computing has revolutionized the field of data engineering by providing scalable and cost-effective solutions for storing, processing, and analyzing large volumes of data. Cloud service providers such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud offer various services and tools that data engineers can leverage.

One of the key benefits of cloud computing in data engineering is scalability. Cloud platforms allow organizations to scale their infrastructure up or down based on their needs without upfront investment in hardware or infrastructure. This makes it easier for data engineers to handle large volumes of data and accommodate changing business requirements.

Another benefit of cloud computing is flexibility. Cloud platforms offer a wide range of services and tools that can be customized to meet specific needs. Data engineers can choose from various storage options, databases, analytics tools, and machine learning frameworks to build their data engineering solutions.

However, there are also challenges associated with cloud computing in data engineering. One of the main challenges is data security and privacy. Organizations must ensure that their data is protected from unauthorized access or breaches when stored in the cloud. This involves implementing robust security measures and complying with regulatory requirements.

Machine Learning and Artificial Intelligence: Integrating Data Engineering with AI/ML

Machine learning (ML) and artificial intelligence (AI) are rapidly transforming various industries by enabling organizations to automate processes, gain insights from data, and make predictions or recommendations. Data engineering plays a crucial role in the success of AI/ML projects by providing clean, organized, and reliable data for training and testing ML models.

Data preparation is a critical step in the ML workflow, and data engineers are responsible for ensuring that the data is in the right format and quality for training ML models. This involves cleaning the data, handling missing values, encoding categorical variables, and normalizing numerical features.
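The preparation steps named above can be sketched in plain Python. The three-row dataset and the `age` and `plan` fields are invented for the example. In practice a library such as pandas or Scikit-learn would usually do this work, but the underlying logic is the same.

```python
from statistics import mean

rows = [
    {"age": 20, "plan": "basic"},
    {"age": None, "plan": "pro"},
    {"age": 40, "plan": "basic"},
]

# 1. Handle missing values: fill missing ages with the mean of the observed ones.
observed = [r["age"] for r in rows if r["age"] is not None]
fill_value = mean(observed)
ages = [r["age"] if r["age"] is not None else fill_value for r in rows]

# 2. Normalize the numerical feature to the range [0, 1] (min-max scaling).
#    Assumes at least two distinct ages; otherwise hi - lo would be zero.
lo, hi = min(ages), max(ages)
scaled = [(a - lo) / (hi - lo) for a in ages]

# 3. Encode the categorical variable as one-hot columns, in sorted category order.
categories = sorted({r["plan"] for r in rows})
features = [
    [s] + [1 if r["plan"] == c else 0 for c in categories]
    for s, r in zip(scaled, rows)
]
```

Each row of `features` holds the scaled age followed by one column per plan. When preparing data for a real model, the fill value, minimum, and maximum should be computed on the training set only and then reused for test data, so that no information leaks from the test set.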

Data engineers must also be familiar with ML frameworks and tools such as TensorFlow, PyTorch, or Scikit-learn. These tools allow data engineers to build and deploy ML models and perform tasks such as feature engineering, model evaluation, and hyperparameter tuning.

In addition to ML, data engineers can leverage AI technologies such as natural language processing (NLP) or computer vision to extract insights from unstructured data such as text or images. This requires knowledge of specialized tools and techniques for processing and analyzing unstructured data.

Career Paths and Opportunities in Data Engineering: Tips for Advancement and Growth

Data engineering offers many career paths and opportunities for advancement and growth. Depending on their interests and expertise, data engineers can work in various industries, such as healthcare, finance, retail, or technology.

One common career path for data engineers is to start as a junior or entry-level data engineer and gradually progress to more senior roles such as senior data engineer or data engineering manager. With experience and expertise, data engineers can also move into specialized roles such as data architect or big data engineer.

Continuous learning and staying up-to-date with the latest technologies and trends are crucial for career advancement in data engineering. Data engineers should invest time in learning new programming languages, tools, and technologies, and in developing soft skills such as communication and problem-solving.

Networking and building connections within the data engineering community can also open up opportunities for career growth. Attending industry conferences, joining professional organizations, and participating in online forums or communities can help data engineers stay connected with peers and learn from others in the field.

In conclusion, data engineering is a critical discipline that enables organizations to collect, store, process, and analyze large volumes of data. It requires technical skills, such as programming and database management, and soft skills, such as problem-solving and communication. Data engineers ensure data quality, design efficient databases, and integrate data from various sources. They must also stay up-to-date with the latest technologies and trends to leverage tools such as Hadoop, Spark, or cloud computing. With the increasing importance of data in today’s world, there are numerous career paths and opportunities for growth in data engineering.